Facial Recognition: How to Find a Face

Teaching computers facial recognition is hard to do, but the reasons we're trying have a lot to do with why faces are important to us.

You’re walking through a crowded train station. Bustling travelers, changing destination signs, blinking lights, flickering monitors, and all manner of motion surround you. Amid all of it, you notice one face that you actually recognize. While we find this process natural, facial recognition involves many stages and mechanisms that scientists are still trying to understand. And while we continue to learn how humans recognize each other, we’re attempting to teach computers how to do it. The truth is, facial recognition is turning out to be more difficult than we imagined.

So why are we doing this, and how will it work, if it works?

A Convenient Truth

We already have ways of identifying people: fingerprint matching, DNA, retina and iris scanning, and even voice recognition are in use and increasingly common. None of these methods is how humans recognize each other, and this can lead to mistakes. In the case of fingerprints, at the level of detail often captured, two different prints may not actually look different to a human or computer observer. Some pretty dramatic mistakes have resulted from treating fingerprints as a reliable identity marker. DNA evidence, often seen as infallible on cop shows, has caused massive problems in the United States justice system, creating confusion for jurors and sending the wrong people to jail. All systems are fallible, but when people can’t double-check the results themselves, there is more risk that an identity system may have undetected failings.

Compared to these other methods, facial recognition has some features to recommend it. First of all, it’s convenient. Throughout much of the world, people don’t cover their entire face, making face recognition widely applicable and comparatively non-invasive. Done right, it doesn’t even require a subject’s cooperation to collect their data. On the other hand, unlike DNA and fingerprints, a person’s face changes. People age, get haircuts and have a wide variety of facial expressions that can morph the shape of the face. Why wouldn’t we choose something easier? It turns out that when it comes to our vision system, faces have a special role…

What’s in a Face?

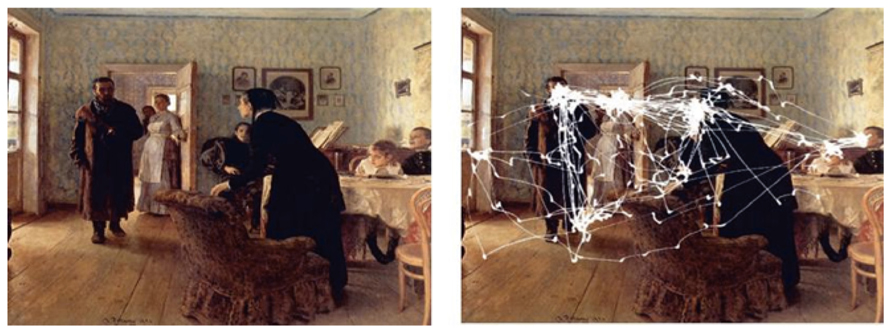

In 1965, Soviet scientist Alfred L. Yarbus proved what other researchers had long suspected: that the eye does its essential business of seeing through motion. When we are shown an image, our eyes dart around on a rapid fact-gathering mission, filtering out the visual noise and zeroing in on the most important details – those that will tell us what (or who) we’re looking at. Given more time to look, we do not turn our attention to an unexplored corner, but compulsively reinvestigate those elements that “allow the meaning of the picture to be obtained.” The element humans go back to most often is the face.

That’s because we gather a lot of information from faces: the identity of the people we see, as well as their gender, age, and how they are feeling. From childhood, we look at others’ facial expressions for clues on how to react to unfamiliar situations. When looking at faces, we follow similar visual patterns, looking at (in order of importance) the eyes, mouth, and nose. Though this task seems innate to us as social animals, it wasn’t until 2012 that researchers at Stanford University were able to find a part of the brain that is responsible for recognizing faces exclusively: the fusiform gyrus. While other parts of the brain are active initially when we look at faces – recognizing each feature as an individual piece – it’s this fusiform area that assembles the information holistically to understand the whole face. The relationship between these key features then helps us form an essential “faceprint” in our mind based on a fraction of the available information.

As we’ve seen in mantis shrimp, the visual system can be optimized to solve for both hard and soft constraints. Limiting attention to the decisive visual features of a face lets processing happen more quickly, and it also gets around the biological bandwidth problem: our eyes can capture a lot more information than can actually be transmitted over the optic nerve. The ganglion cells in the human retina can produce the equivalent of a 600-megapixel image, but the nerve that connects to the retina can only transmit about one megapixel.

The darkness of the eyes and the brighter bridge of the nose are used to detect a face. You can see in this video that while the detection method is not very sophisticated, computers are very fast, and so can use their speed to identify faces in short time frames with a high degree of accuracy.

Making a Face

To build face detection software, researchers were able to use what had been learned from our biology to make new technology. All human faces share some similar properties that can be used to establish “this is a face” and then to determine which face it is from an existing database.

Properties that identify a face include:

- The eye region is darker than the upper-cheeks.

- The nose, forehead, and cheeks are brighter than the eyes.
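The brightness contrasts listed above can be tested with a simple rectangle comparison. The following is a minimal sketch, not the method used by any particular product: it assumes a grayscale image stored as a list of rows, and the function names (`integral_image`, `rect_sum`, `eye_cheek_feature`) and the toy pixel values are illustrative inventions. It uses a summed-area table so that any rectangle's total brightness costs only four lookups, which is one reason this kind of check can run quickly over many image positions.

```python
def integral_image(img):
    """Summed-area table with an extra zero row and column."""
    h, w = len(img), len(img[0])
    ii = [[0] * (w + 1) for _ in range(h + 1)]
    for y in range(h):
        row_sum = 0
        for x in range(w):
            row_sum += img[y][x]
            ii[y + 1][x + 1] = ii[y][x + 1] + row_sum
    return ii


def rect_sum(ii, top, left, height, width):
    """Sum of the pixels in a rectangle, using four table lookups."""
    bottom, right = top + height, left + width
    return ii[bottom][right] - ii[top][right] - ii[bottom][left] + ii[top][left]


def eye_cheek_feature(ii, top, left, height, width):
    """Two-rectangle feature: lower-band sum minus upper-band sum.

    Positive when the upper band (eyes) is darker than the lower band (cheeks).
    """
    half = height // 2
    eyes = rect_sum(ii, top, left, half, width)
    cheeks = rect_sum(ii, top + half, left, half, width)
    return cheeks - eyes


# Toy 4x4 grayscale patch (made-up values): dark "eye" rows above bright "cheek" rows.
patch = [
    [30, 40, 40, 30],
    [35, 45, 45, 35],
    [200, 210, 210, 200],
    [190, 205, 205, 190],
]
ii = integral_image(patch)
score = eye_cheek_feature(ii, 0, 0, 4, 4)
looks_face_like = score > 0  # the threshold of 0 is an arbitrary choice for this sketch
```

A real detector would combine many such features at different positions and scales, with thresholds learned from example faces rather than fixed by hand.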

Properties that identify a specific face:

- Location and size: eyes, mouth, bridge of nose

- Value: oriented gradients of pixel intensities
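To make "oriented gradients of pixel intensities" concrete, here is a hedged sketch of one way to summarize them: a histogram of edge directions, weighted by edge strength. It assumes a grayscale image stored as a list of rows; the function name `gradient_orientation_histogram` and the choice of 8 bins are assumptions made for illustration, not details from any specific recognition system.

```python
import math


def gradient_orientation_histogram(img, bins=8):
    """Normalized histogram of unsigned gradient orientations, weighted by magnitude."""
    h, w = len(img), len(img[0])
    hist = [0.0] * bins
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            # Central differences approximate the brightness gradient.
            gx = img[y][x + 1] - img[y][x - 1]
            gy = img[y + 1][x] - img[y - 1][x]
            magnitude = math.hypot(gx, gy)
            if magnitude == 0:
                continue  # flat region: no orientation to record
            angle = math.atan2(gy, gx) % math.pi  # unsigned orientation in [0, pi)
            index = min(int(angle / math.pi * bins), bins - 1)
            hist[index] += magnitude
    total = sum(hist)
    return [v / total for v in hist] if total else hist


# Toy images (made-up values): brightness changes left-to-right, then top-to-bottom.
vertical_edge = [[0, 0, 100, 100] for _ in range(4)]
horizontal_edge = [[0] * 4, [0] * 4, [100] * 4, [100] * 4]

hist_v = gradient_orientation_histogram(vertical_edge)
hist_h = gradient_orientation_histogram(horizontal_edge)
```

The two toy images put all of their weight in different bins, which is the point: the histogram records which way brightness changes and ignores overall brightness. Histograms computed over small patches of two face images could then be compared to judge how similar the faces are.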

While this approach, at its most basic, can be very effective for static images using a significant database (see Google’s face-matching Arts & Culture app, and Facebook’s automatic face search), things start to fall apart in more real-world applications like surveillance. Despite its apparent success in movies and television shows, real-time recognition is very difficult: rough camera footage usually has variations in lighting, angle, image noise, frame rate, and resolution, and the subjects themselves vary from day to day. New technologies are still being developed, though, that may end up justifying CSI detectives after all.

A Good-Looking Industry

Research, invention, and innovation will continue to evolve to solve these problems (and the next ones). Analysts predict that the global facial recognition market will grow from USD 4.05 billion in 2017 to USD 7.76 billion by 2022. Companies are very interested in the possibilities of facial recognition technologies, and global security concerns are driving interest in better biometric systems. As these systems mature into both independent technologies and sophisticated services, we’ll see governments, security organizations, militaries, retail corporations, and healthcare institutions looking for ways to move them forward.

In Part II, we will explore some of the challenges of real-world facial recognition, and how a combination of software and hardware, with 3D and multispectral solutions, points the way to success.
