Imaging Inside Out: SWIR for Apples

Changing markets drive new investment in a broader spectrum of machine vision for food production

Food. The food industry forms the economic backbone of many countries, both for national consumption and export. It contributes to jobs and livelihoods around the world and is an expression of culture and values. It’s also essential to our survival.

And it’s changing. While it’s still too early to measure the true impacts of COVID-19 on global trade and consumption, many other factors are driving changes to food production, particularly climate change and population growth.

Some food supplies can continue with little interruption. While there are more than 50,000 edible plants in the world, just 15 of them provide 90% of the world’s food energy intake. Of these, rice, corn, and wheat make up nearly two-thirds. These core staples are easy to store and process. Already, many U.S. farmers producing wheat and rice have been able to rely on mechanized tools and processes that limit human-to-human contact and meet CDC guidelines for safe operation, even under pandemic conditions. As a result, the International Food Policy Research Institute reported in March 2020 that the pandemic does not currently pose a major threat to global food security; adequate stores of these staples remain.

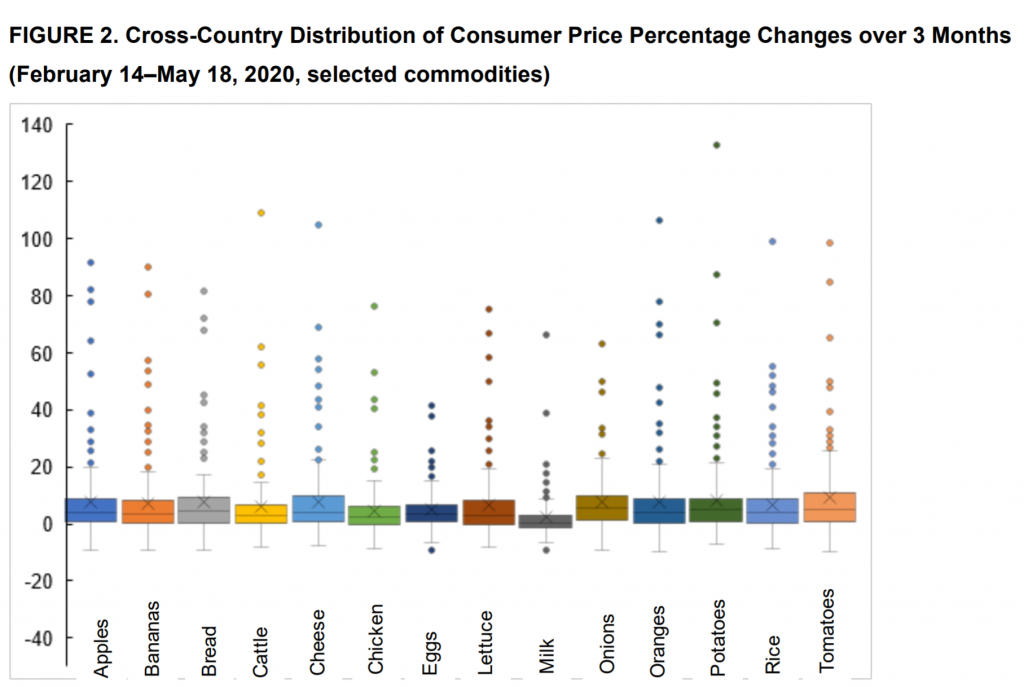

But higher-value, more specialized crops will face a greater number of hurdles. Fruits and organic produce are grown by smaller farms and require more labor. According to the UN and IMF, measures to prevent outbreaks will disproportionately affect these farms and potentially create price spikes that hit restaurants and consumers further down the supply chain. With higher costs across the board, there’s already evidence of increasing fruit prices more than offsetting lower vegetable production.

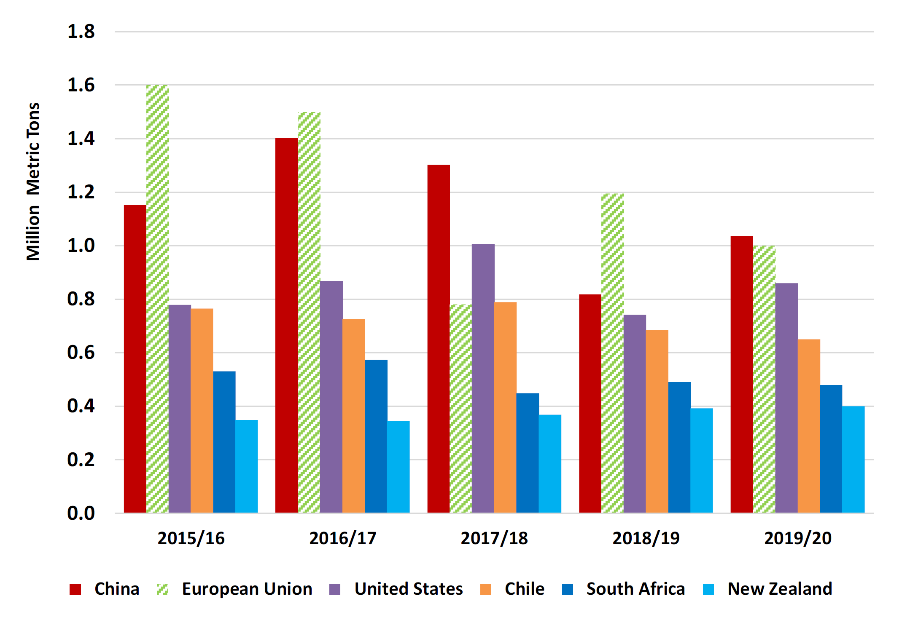

A great example is apples, one of the most economically and culturally significant fruits in the world today, grown in temperate zones worldwide. They’re also a high-value fruit that requires large crews for planting and pruning to ensure healthy trees and large harvests. Apples are a big business, with major players on every continent where they grow.

Pardon the pun, but apples are also a growing business! Even during the pandemic, world production for 2019/20 is estimated to rise nearly 7% to 75.8 million metric tons. China has rebounded this year from the frosts of 2018/19, growing production by 24%; this makes it the top apple exporter again and offsets lower production in the European Union, which suffered a combination of frost, drought, heat, and hail. U.S. production is estimated to have increased by more than 300,000 tons to 4.8 million tons, on rebounding output in top grower Washington State thanks to favorable summer weather. Higher-quality supply, and more of it, is expected to further boost exports to key markets.

Over the last several years, demands on the industry have evolved toward better quality, improved food safety, and traceability. Higher standards for the treatment of labor, lower energy and water consumption, and safer agrichemicals have also steadily raised production costs. This has put significant pressure on growers, processors, and retailers to adapt their supply chains. Recent developments in precision agriculture, molecular biology, phenomics, crop modelling, and post-harvest physiology should increase yields and quality and reduce costs for temperate fruit production around the world.

Delivering a Better Apple with Vision

If apples are going to be more vulnerable, scarce, and expensive, how can machine vision help?

People, as always, are inconsistent, both from one inspector to the next and for the same inspector from day to day. Traditional inspection (humans manually cutting open and examining produce) is destructive, laborious, time-consuming, costly, and subjective. In contrast, imaging systems allow for high-speed, non-destructive quality inspection and grading. Visible, multispectral, and infrared imaging technology is already being deployed to varying degrees across fruit and vegetable grading systems. Still, automated quality inspection and grading remain challenging, which slows their adoption in robotic fruit and vegetable grading systems.

The challenges are multifold:

- Physical and biological variability (there are thousands of apple varieties in the U.S. alone)

- Surface detection on uneven, rounded objects that vary significantly in size and shape

- Discrimination between defects and natural features

- The reliability of the algorithms to date

- The conflict between speed and accuracy in any optical detection system

What You’re Looking for: Defining Quality

Looking at these challenges, it turns out that “quality” is difficult to define. It’s not one single well-defined attribute, but a collection of characteristics that together define a quality fruit. Research has shown that even among relatively similar cultures, where the qualities themselves are fairly consistent, the way quality is recognized varies significantly. When more diverse groups are asked, cultural consensus on ‘quality’ falls apart very quickly. These kinds of cultural differences show up all across research (it can get really wild when you look at ‘happiness’), and they force us to look at specific, more easily quantifiable aspects of a given apple.

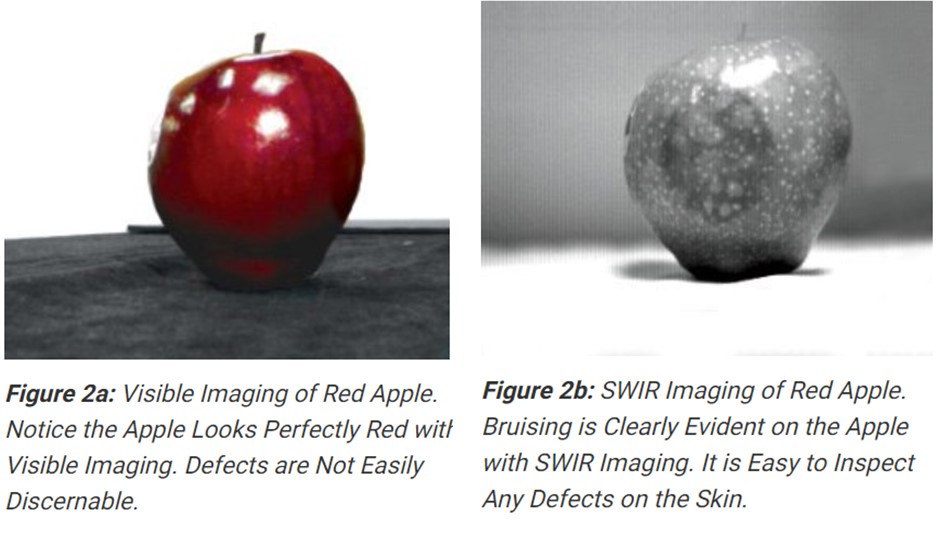

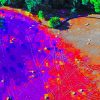

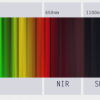

That’s only slightly less difficult. We’ve bred apples to look good to us. Beyond that, visible light tends to stop at the skin of the fruit: it is absorbed by pigments such as chlorophyll, while other bands of light are scattered by the structured tissues underneath. By moving to NIR and SWIR, you can see water density and distribution inside the apple more clearly. These indicate key physical attributes that help predict the measurable ‘quality’ of the apple, such as texture, water-binding capacity, and specific gravity.
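One way to turn “seeing water” into a number is to measure how deep a water absorption band is in each pixel’s spectrum. The sketch below is a minimal, hypothetical example, not a method from the studies discussed here: it computes a continuum-removed band depth around the strong 1450 nm water absorption band, with shoulder wavelengths of 1300 and 1600 nm chosen purely as assumptions. The names `band_depth` and `_interp_last_axis` are illustrative.

```python
import numpy as np


def _interp_last_axis(wavelengths, r, wl):
    """Linearly interpolate every spectrum in `r` (last axis = bands) at wavelength `wl`.

    Assumes `wavelengths` is increasing and `wl` lies inside its range.
    """
    i = int(np.clip(np.searchsorted(wavelengths, wl), 1, len(wavelengths) - 1))
    w0, w1 = wavelengths[i - 1], wavelengths[i]
    f = (wl - w0) / (w1 - w0)
    return (1.0 - f) * r[..., i - 1] + f * r[..., i]


def band_depth(wavelengths, reflectance, center=1450.0, left=1300.0, right=1600.0):
    """Continuum-removed depth of an absorption band (0 means no absorption feature).

    The default center sits on a water absorption band; the shoulder
    wavelengths `left` and `right` are illustrative assumptions.
    """
    wavelengths = np.asarray(wavelengths, dtype=float)
    r = np.asarray(reflectance, dtype=float)
    r_left = _interp_last_axis(wavelengths, r, left)
    r_right = _interp_last_axis(wavelengths, r, right)
    r_center = _interp_last_axis(wavelengths, r, center)
    # Straight-line "continuum" between the two shoulders, evaluated at the band center
    t = (center - left) / (right - left)
    continuum = (1.0 - t) * r_left + t * r_right
    return 1.0 - r_center / continuum
```

Because the interpolation only indexes the last axis, the same call accepts a single spectrum or a whole (rows, cols, bands) image cube and returns one depth per pixel, which could be mapped to show where water absorption is relatively stronger or weaker across the fruit.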

Abnormalities in texture and density can indicate bruising, which is particularly important for quality inspection systems. “Bruising” is damage to fruit tissue from external forces that compress, cut, or abrade the skin and flesh of the apple. This causes physical changes in the texture and chemical composition of the fruit, with immediate and long-term effects on the color, smell, taste, and longevity of the product.

The susceptibility of apples to mechanical damage depends on many factors, including soil cultivation, nutrition, and weather conditions in the field during fruit growth, all of which have become more complex and extreme over the last few decades. Harvesting and transportation themselves can introduce bruising to the product, and sticks and other debris in the production line can be equally damaging.

With optical sorting, bruised product can be caught and removed. In fact, with more efficient sorting there is less likelihood of waste – apples can instead be sorted by their suitability for other products, like jams, preserves, and frozen mixes. Even though bruising is the top reason for rejecting fruit in sorting lines, automatic sorting systems still often lack precision in detecting bruises, driving companies to fall back on manual sorting.

Infrared Imaging for Shelf Life

So how do we achieve higher precision? We’ve seen how short-wave infrared imaging can help enable precision farming of crops in the field.

But once a crop is harvested, the need changes. A primary challenge for the plant food industry is that the shelf life of plant foods is almost always short. Generally, a shelf life of about 7 days is required for domestic consumption and 7 to 15 days for overseas consumption. Through postharvest processing, storage, and transportation, the physiological qualities of harvested foods continue to change.

In 2007, researchers were able to use NIR/SWIR measurements to distinguish between apple varieties, storage types, and storage durations. Later, researchers used IR spectroscopy to examine the eating and sensory quality of apples (or what humans refer to as taste and texture) after 6 months of air or controlled-atmosphere storage. However, neither study was predictive; both only examined the apples after storage. It took further improvements in infrared imaging technology to take the next step.

For industrial evaluation that can inform production decisions, determining fruit quality needs to go beyond the immediately visible identifiers that consumers rely on in the store, such as shape, size, color, texture, and defects. Fruit producers need to look at the non-visible features that indicate future quality and longevity: sugar content, firmness, soluble solids content, and nutritional content. To do so, researchers created a system that imaged reflected radiation across the visible and near-infrared (VNIR), SWIR, and MWIR ranges, from 400 to 5000 nm. It turned out that the whole spectrum is useful for detecting bruises: the deeper into the infrared range the imaging stretched, the deeper into the apple’s tissue the researchers could evaluate.

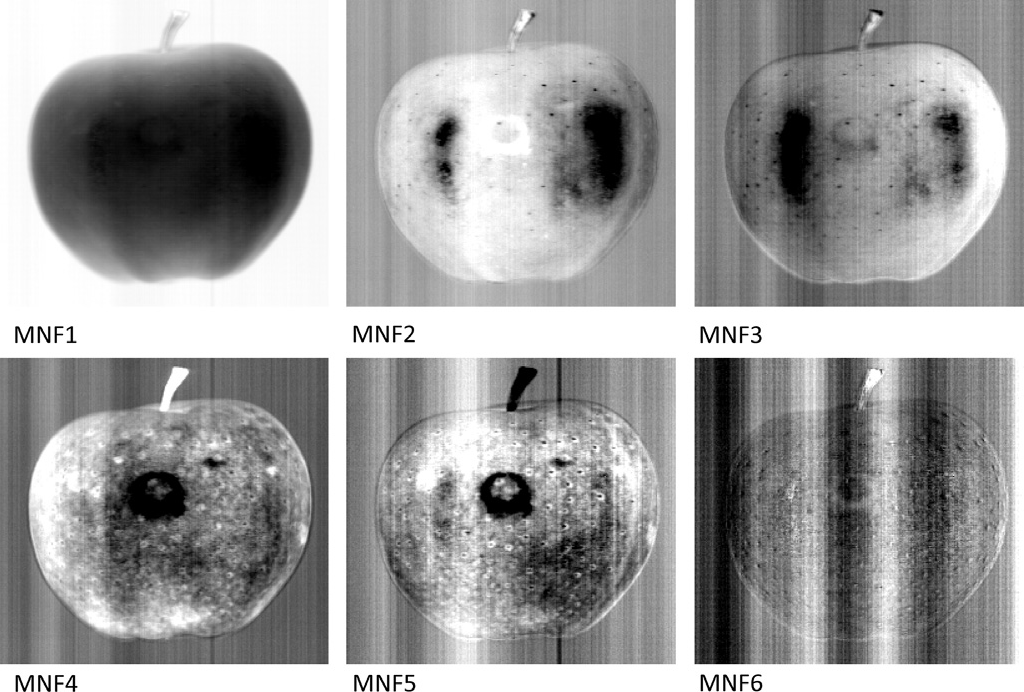

Clearly, a more comprehensive approach was needed to power production decisions. Researchers then moved to hyperspectral image analysis that included both NIR and SWIR wavebands, each probing a different depth within the apple. Adding MWIR imaging proved useful for more extensive bruise recognition.

This was a lot of data to use simultaneously, particularly because the target object was not 2D, but 3D. Even better results were obtained by applying a Minimum Noise Fraction (MNF) rotation, which determines the inherent dimensionality of the image data, segregates noise, and reduces the computational requirements of subsequent processing. The resulting MNF components can also be preferable inputs for image segmentation.
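For readers who want to see what an MNF rotation does, here is a minimal NumPy sketch of its classic two-step form: whiten the noise, then run principal component analysis on the whitened data. It assumes the noise is estimated with the shift-difference method (differences between horizontally adjacent pixels), which is one common choice and not necessarily the one the researchers used. The names `mnf` and `inherent_dimensionality`, and the threshold of 2.0, are hypothetical.

```python
import numpy as np


def mnf(cube, n_components=None):
    """Minimum Noise Fraction rotation of a (rows, cols, bands) image cube.

    Noise is estimated by shift difference (horizontally adjacent pixels),
    which assumes the signal changes slowly between neighbours while the
    noise does not. Returns component images and eigenvalues, highest
    signal-to-noise first.
    """
    rows, cols, bands = cube.shape
    cube = cube.astype(float)

    # Pixels as rows of an (n_pixels, bands) matrix, mean-centred per band
    X = cube.reshape(-1, bands)
    X = X - X.mean(axis=0)

    # var(a - b) = 2 * var(noise) when neighbouring noise samples are independent
    diff = (cube[:, 1:, :] - cube[:, :-1, :]).reshape(-1, bands)
    noise_cov = np.cov(diff, rowvar=False) / 2.0

    # Step 1: noise whitening, so the estimated noise has identity covariance
    n_vals, n_vecs = np.linalg.eigh(noise_cov)
    n_vals = np.clip(n_vals, 1e-12, None)  # guard against zero-variance directions
    whiten = n_vecs / np.sqrt(n_vals)      # scales eigenvector j by 1/sqrt(lambda_j)
    Xw = X @ whiten

    # Step 2: ordinary PCA on the noise-whitened data
    d_vals, d_vecs = np.linalg.eigh(np.cov(Xw, rowvar=False))
    order = np.argsort(d_vals)[::-1]       # eigh returns ascending order; flip it
    d_vals, d_vecs = d_vals[order], d_vecs[:, order]

    components = Xw @ d_vecs
    if n_components is not None:
        components = components[:, :n_components]
        d_vals = d_vals[:n_components]
    return components.reshape(rows, cols, -1), d_vals


def inherent_dimensionality(eigenvalues, threshold=2.0):
    """Count components whose eigenvalue clearly exceeds the noise floor of about 1."""
    return int(np.sum(eigenvalues > threshold))
```

After whitening, pure-noise components have eigenvalues near 1, so counting the eigenvalues well above 1 gives a rough estimate of how many bands of real information the cube holds; only those leading components would then be passed on to segmentation in place of the full band stack.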

Models built on individual ranges (VNIR, SWIR, or MWIR) gave lower total prediction scores than models that included the ranges jointly. The supervised classification models showed that the best efficiency in distinguishing bruised from sound tissue, and in separating bruises of various depths, came from incorporating all three ranges together. This suggests that it would be reasonable to consider including the MWIR range in sorting systems.
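Joining the ranges into one model can be sketched as feature-level fusion: split each spectrum into VNIR, SWIR, and MWIR blocks, standardize each block, and concatenate them before classification. The source does not say which supervised classifier was used, so this sketch uses a simple nearest-centroid classifier as a stand-in. The range boundaries in `RANGES` are assumptions (only the overall 400 to 5000 nm span comes from the text), and all function and class names are hypothetical.

```python
import numpy as np

# Assumed range boundaries in nm, as half-open intervals [lo, hi).
# MWIR's upper bound is 5001 so that 5000 nm itself is included.
RANGES = {"VNIR": (400, 1000), "SWIR": (1000, 2500), "MWIR": (2500, 5001)}


def split_by_range(wavelengths, spectra, ranges=RANGES):
    """Split (n_samples, n_bands) spectra into one block per wavelength range."""
    wavelengths = np.asarray(wavelengths)
    return {
        name: spectra[:, (wavelengths >= lo) & (wavelengths < hi)]
        for name, (lo, hi) in ranges.items()
    }


def fit_fusion(blocks, names):
    """Per-feature mean and standard deviation of each selected block (training data)."""
    return {n: (blocks[n].mean(axis=0), blocks[n].std(axis=0) + 1e-12) for n in names}


def fuse(blocks, names, stats):
    """Standardize each selected block and concatenate into one feature matrix."""
    return np.hstack([(blocks[n] - stats[n][0]) / stats[n][1] for n in names])


class NearestCentroid:
    """Minimal stand-in classifier: assign each sample to the closest class mean."""

    def fit(self, X, y):
        self.classes_ = np.unique(y)
        self.centroids_ = np.stack([X[y == c].mean(axis=0) for c in self.classes_])
        return self

    def predict(self, X):
        # Squared Euclidean distance from every sample to every class centroid
        d = ((X[:, None, :] - self.centroids_[None, :, :]) ** 2).sum(axis=-1)
        return self.classes_[np.argmin(d, axis=1)]
```

Standardizing each block separately keeps a range recorded on a very different scale, such as MWIR, from dominating the distance calculation. Calling `fuse` with `["VNIR"]` alone versus `["VNIR", "SWIR", "MWIR"]`, and scoring both on held-out fruit, is one way to compare a single-range model against a joint one.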

Great, how do we do that?

…to be continued in Part II
