MEMS and Micromachines of Guiding Light

Lidar has been a challenge for carmakers hoping to make autonomous vehicles a reality. Does MEMS offer more options to consider?

Interacting with our surroundings at ever smaller scales has been a goal since Richard Feynman’s seminal lecture “There’s Plenty of Room at the Bottom,” which challenged researchers to explore the micro and nano worlds.

In the late 1960s, researchers were working on the foundations for present day microelectronics, which paved the way for the miniaturization of mechanical systems. The development of micromachining technology was based on silicon semiconductor fabrication technology. Sensors and actuators could interact with the environment on size and time scales not previously possible, detecting motion, analyzing minute chemical samples, and manipulating light beams with speed and accuracy.

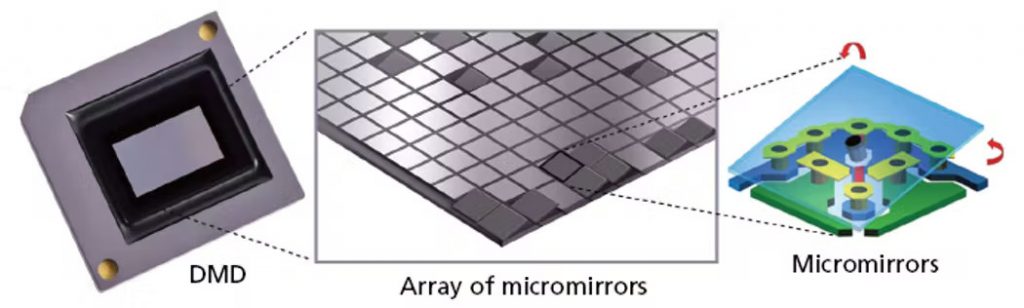

The idea of creating MEMS took hold in the 1980s, before the acronym was coined, starting with Kurt Petersen’s “Silicon as a Mechanical Material.” The original exploration relied on equipment built for the semiconductor industry, which led to many unique approaches. MEMS is now a more mature technology, with specialized equipment designed to accommodate the unique requirements of MEMS devices. Manufactured in high volume, these devices have been successfully integrated into numerous products as microphones, pressure sensors, inertial sensors (such as accelerometers and gyroscopes), and micromirror arrays.

In many cases, the move to MEMS-based sensors was motivated by greater efficiency compared to larger options. Commercial MEMS products can be integrated and packaged together with integrated circuits, allowing the sensor and actuator to share the same substrate. For MEMS actuators, high positioning speed, low power consumption, and high reliability can be achieved. By combining actuator mechanisms, sensors for position feedback, and microelectronic circuitry, complete and highly miniaturized sensor and actuator systems can be realized on the same chip at a low cost.

This has allowed MEMS technology to spread from industry to industry and application to application: from early pressure sensors (which are still widespread in the automotive industry) to inertial sensors for airbag deployment, to Digital Micromirror Devices for displays, to microphones and micromachined speakers driven by the explosion of voice-activated IoT devices in both consumer and industrial markets.

Today, most people interact with MEMS daily. A new car will have dozens of MEMS-based sensors, and new mobile phones contain accelerometers, gyroscopes, magnetometers, pressure sensors, and microphones. As MEMS become smaller, require less power, and become less expensive to manufacture, they are expected to play an increasing role in the wireless internet of things (IoT) and home automation.

Today, some of the most exciting new opportunities exist in the field of optical MEMS.

The Optical Opportunity

Twenty years ago, optical MEMS were largely directed toward telecom applications. Even today, silicon-structured MEMS micromirrors are the largest subset of the market for optical MEMS devices. They are generally used to provide steered geometric reflection of laser beams in information and communication technologies (such as large-port optical cross connects for cloud data centers). The high fidelity of geometric steering mirrors based on single-crystal silicon made it possible to transfer a laser signal directly from port to port. Previously, directing a signal from one port to another required a large system of filters, detectors, amplifiers, and electronics to drive another laser at the second port.

Rather than geometric steering of a mirror, techniques have been developed around diffractive manipulation of light. Commercial diffraction gratings were first made in the 1940s, and this technique made many laboratory instruments possible. Fixed gratings for creating spectra or filtering telecom wavelengths are invaluable and part of the optics equation which allows MEMS to realize the optical networks of today. When MEMS moving parts are made at the scale of the wavelength of light, the small distance of travel and capability of addressing individual elements of a MEMS grating array allows the fabrication of a high-speed programmable grating. In this way, the grating can be selectively turned on and off on demand. It is safe to say that if you have read a magazine in the last 25 years, the printing plate for it was imaged through high-speed diffraction imaging of high power lasers, and a large plate can be imaged to ten microns resolution in minutes through manipulation of gigabytes of information of on/off grating signals across the media.
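To give a feel for why grating elements built at the scale of the wavelength matter, the standard grating equation for light at normal incidence, d·sin(θ) = m·λ, relates the grating pitch d to the angle θ of each diffracted order m. The Python sketch below assumes an idealized grating; the function name and the example pitch and wavelength are illustrative and are not taken from any specific device.

```python
import math

def diffraction_angle_deg(pitch_um, wavelength_um, order=1):
    """Angle of a diffracted order for light at normal incidence.

    Uses the grating equation d * sin(theta) = m * lambda.
    Returns None when the requested order does not propagate.
    """
    s = order * wavelength_um / pitch_um
    if abs(s) > 1.0:
        return None
    return math.degrees(math.asin(s))

# Hypothetical example values (not from the article)
first_order = diffraction_angle_deg(2.0, 1.0)  # 2 um pitch, 1 um light: about 30 degrees
blocked = diffraction_angle_deg(0.5, 1.0)      # pitch finer than the wavelength: None
```

Under these assumptions, a pitch only a few wavelengths wide already sends the first order tens of degrees away from the undiffracted beam, which is what makes an on/off grating signal easy to separate optically.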

The ability to manipulate light in this way has opened up new applications and attracted growing interest in using MEMS for biosensing, quantum sensing, and miniature optical scanning for virtual and augmented reality.

One of the most demanding applications for MEMS may be autonomous driving, which places high requirements on reliable environmental sensing and localization and therefore on the underlying sensor technology, such as inertial sensors and optical components for lidar.

The Advanced Driver-Assistance Systems (ADAS) Challenge

The advancement of autonomous vehicles, especially unmanned aerial vehicles (UAVs), has fueled demand for lidar that is higher performing, cheaper, and more efficient. According to McKinsey & Company, the global sensor market for ADAS and autonomous driving (AD) systems will be worth US$43 billion by 2030. The fastest-growing segment, and the biggest bottleneck, remains lidar.

The technology choices made today will have immense consequences for performance, price and scalability of lidar in the future. The present state of the lidar market is unsustainable because winning technologies and players will inevitably emerge, consolidating the technology and business landscapes.

Lidar: The ADAS Sensor of Choice?

Today, motorized optomechanical scanners that move the lasers themselves are the most common type of lidar. This type of scanner can be built with a long range (because many individual lasers are used), a wide field of view (FoV), and fast scanning. Variants are built with multiple channels of transmitters and receivers stacked vertically and rotated by a motor to generate a full 360° FoV with multiple horizontal lines. Such lidar systems are not power-efficient, and their moving parts make them vulnerable to mechanical interference. The need to rotate means that only a portion of the FoV is covered at any one time, a particular challenge for vehicles that are vulnerable from any direction. The situation is analogous to a World War II rotating radar dish, where the position of an enemy squadron was updated on the display only once per rotation.

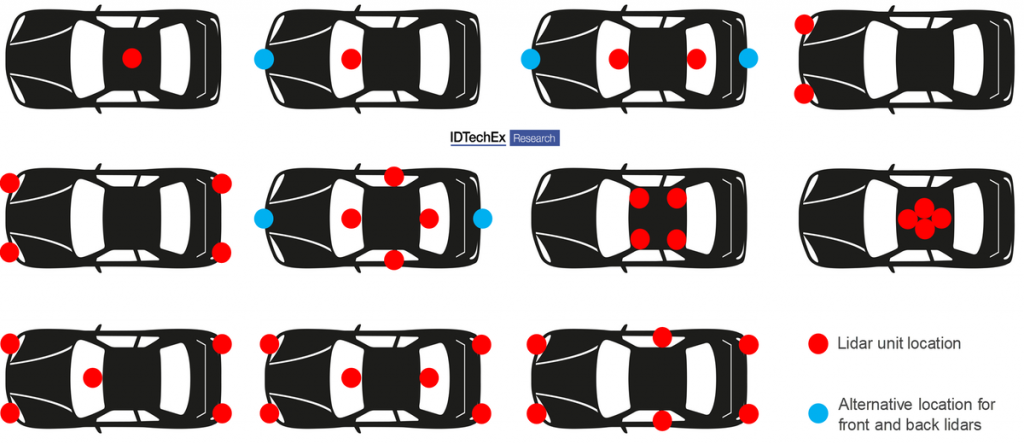

Their vertical resolution is fixed by the number of transmitter and receiver channels, so a higher vertical resolution means higher cost. This has confined lidar primarily to airplane or vehicle-based systems. A lidar system is often the most expensive component of a self-driving car. But there are almost two billion reasons to think that might change: 3D lidar start-ups targeting the autonomous vehicle market have secured US$1.9 billion in funding for product development.

Automotive companies are exploring many solutions simultaneously. The first company to arrive at a fully functioning ADAS will have a significant competitive advantage. Many systems make up an ADAS, but sensors have been a key component from the beginning. Lidar has been part of the conversation ever since it helped the Stanley robot win the DARPA Grand Challenge for autonomous driving.

Over time, lidar development has been slower than expected, and systems remain expensive. This has led many to claim that lidar is on its way out, and that more established 2D machine vision systems, backed by a lot of deep learning, might be a better solution. Tesla CEO Elon Musk even called lidar a “crutch” and claimed any autonomous vehicle developer using it is “doomed.” Tesla then went as far as removing the onboard radar sensors from its latest models, relying instead on the vehicles’ suite of cameras and ultrasonic sensors, which prompted the US National Highway Traffic Safety Administration to downgrade the vehicles’ safety ratings in 2021.

When it comes to sensor systems for vehicles, the focus has been on Level 3 driving systems and above. At Level 3, Conditional Driving Automation, the vehicle has environmental detection capabilities and can make informed decisions but still requires human override in some situations. The driver must remain alert and ready to take control. Automation continues from there to Level 4 and then Level 5, culminating in fully automated driving that requires no human attention. The investment in this category is huge, with the global market for 3D lidar in Level 3+ autonomous vehicles predicted to grow to $5.4 billion by 2030.

Interestingly, the same IDTechEx report predicted that MEMS lidar would emerge as the largest market segment. What could MEMS have to do with lidar?

MEMS to the Rescue?

To prove Musk and the other doubters wrong, Lidar maker Luminar posted the video results of a head-to-head test of a lidar-less Tesla Model Y and a Lexus RX armed with its own lidar system. The Lexus was the clear winner in the rather morbid “Would it run over a small child?” competition. Despite the win, and despite products first being announced in 2018, the Luminar lidar system is still confined to concept cars in 2022.

There are many ways to approach lidar, each with its own trade-offs. A moving laser system affords high power (long range) but sacrifices speed, while small waveguide-based systems or liquid crystals that use phase steering cannot as easily accommodate high-power lasers. Is there a way to combine the advantages of lidar with the cost and efficiency that a mass-market product like a car requires? McKinsey thinks that a solid-state solution to lidar steering will make that possible.

Lidar manufacturers are working with a number of approaches, all of which compromise on either cost or performance. System designs are not as varied as the number of manufacturers might suggest, but each problem still has many competing solutions. Completely silicon-based systems are attractive for cost and reliability. Cost reductions may also be realized in Frequency Modulated Continuous Wave (FMCW) systems operating at wavelengths longer than silicon can detect, which would make them more competitive.

Mechanically or magnetically steered mirrors contrast with steered MEMS mirrors, which have a different set of advantages and disadvantages. In a MEMS mirror-based lidar, only the tiny mirrored plate of the MEMS device (whose diameter is in the range of 1–7 mm) moves, while the rest of the components in the system are stationary. This allows a more compact design than a mechanically moving laser. The mirror is still smaller than the beam size desired for the scales needed in automotive lidar, so designs push the limits of both cost-efficient MEMS manufacturing and the system requirements.
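One way to see why a 1–7 mm mirror is on the small side is a back-of-the-envelope diffraction estimate: a beam leaving an aperture of diameter D spreads with an angle of roughly λ/D. The Python sketch below uses that order-of-magnitude rule only; the 905 nm wavelength and 200 m range are assumed values chosen for illustration and do not come from the article, and the function names are hypothetical.

```python
def divergence_rad(wavelength_m, aperture_m):
    """Order-of-magnitude diffraction-limited divergence: theta ~ lambda / D."""
    return wavelength_m / aperture_m

def spot_diameter_m(wavelength_m, aperture_m, range_m):
    """Rough far-field spot diameter: initial aperture plus diffraction spread."""
    return aperture_m + range_m * divergence_rad(wavelength_m, aperture_m)

WAVELENGTH_M = 905e-9  # assumed laser wavelength, not from the article
RANGE_M = 200.0        # assumed target distance, not from the article

for diameter_mm in (1.0, 7.0):  # the two ends of the 1-7 mm mirror range
    d = diameter_mm * 1e-3
    spot = spot_diameter_m(WAVELENGTH_M, d, RANGE_M)
    print(f"{diameter_mm:.0f} mm mirror -> ~{spot * 100:.1f} cm spot at {RANGE_M:.0f} m")
```

Under these assumptions the smaller mirror produces a spot several times wider at range than the larger one, which is the pressure toward bigger (and costlier) MEMS mirrors.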

The most critical characteristics of MEMS mirrors are that they are small and steer light in free space. Compared to motorized scanners, MEMS scanners are potentially superior in size and scanning speed, but reaching steering angles as wide as those of galvo-driven mirrors is difficult.

Solid-state beam scanning uses optical phased arrays, requiring no moving parts. While the systems are capable of high performance within a smaller power envelope, the FoV of each laser is quite small, sometimes requiring hundreds of units and a way to combine all the available information.
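As a minimal sketch of how a phased array steers without moving parts, the textbook relation for a uniform linear array is sin(θ) = λ·Δφ / (2π·d), where d is the emitter pitch and Δφ is the phase step applied between neighboring emitters. The Python function below is a hypothetical illustration of that relation; the example wavelength and pitch are assumptions, not figures from any product.

```python
import math

def opa_steering_angle_deg(wavelength_um, pitch_um, phase_step_rad):
    """Steering angle of an idealized uniform linear phased array.

    sin(theta) = wavelength * phase_step / (2 * pi * pitch)
    """
    s = wavelength_um * phase_step_rad / (2.0 * math.pi * pitch_um)
    if abs(s) > 1.0:
        raise ValueError("phase step and pitch give no propagating beam")
    return math.degrees(math.asin(s))

# Hypothetical example: 1.55 um light, 3.1 um emitter pitch
straight_ahead = opa_steering_angle_deg(1.55, 3.1, 0.0)   # no phase step: 0 degrees
steered = opa_steering_angle_deg(1.55, 3.1, math.pi)      # sin(theta) = 0.25
```

In this idealized model, the beam direction changes purely by reprogramming the phase step, and a coarser emitter pitch yields a narrower steering range for the same phase range.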

Some companies believe the key to making lidar affordable and reliable for autonomous vehicles is to move toward solid-state designs with no moving parts. That obviously requires some mechanism for steering a laser beam in different directions without mechanically moving the laser or any macroscopic mirrors. MEMS scanning mirrors and optical phased arrays (OPAs) are the two most investigated approaches to solid-state scanning lidar. A MEMS scanning mirror is technically still a mechanically scanned system, but its miniature scale, small inertia, and high resonant frequency make it more robust in harsh mechanical and temperature environments, while the microfabrication approach is suited to bringing down the price through large-volume production.

It is interesting to note that one of the newest offerings in lidar is from Velodyne, a company historically built on moving-laser techniques. It has now released the Velabit, a smaller but impressively specified system. The power and range are lower than those of the company's multi-laser system, but the simplicity of the system might promise a price reduction that allows multiple units to be integrated into a vehicle.

Illuminating a Bigger World of Optical MEMS

More companies are exploring MEMS solutions, which might enable a solution that ticks all the boxes. In the end, MEMS-based mirror devices perfectly embody the key advantages of the MEMS approach: price, miniaturization, scalability, efficiency, and speed. Accuracy, flexibility, and software control seem to be the next hurdles to overcome. This may put MEMS-based approaches to lidar steering on the right side of disruption, where the market-dominant solution initially comes in cheaper and perhaps not quite “good enough,” just waiting for scale and some innovation to allow it to eat the rest of the market.

MEMS definitely has the opportunity for scale. As big as the automotive opportunity is, and as quickly as the industry is moving, there’s a lot more going on. The usage of microphones, inertial sensors, and optical MEMS devices is projected to keep growing in personal electronics, industrial, and automotive applications, with the whole market reaching US$18.2 billion by 2026 (~7% CAGR). The driving forces will continue to be size reduction, cost reduction, and performance increases, particularly in consumer electronics. As we’ve seen so often, trends in one industry become opportunities in others.
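For readers who want to sanity-check the market figure, a compound annual growth rate (CAGR) links a starting value to an end value through end = start × (1 + rate)^years. The Python sketch below backs out the starting market size implied by US$18.2 billion at roughly 7% CAGR. The five-year span is an assumption made here for illustration, since the base year of the forecast is not stated; the function name is likewise hypothetical.

```python
def implied_start_value(end_value, cagr, years):
    """Back out the starting value implied by an end value and a CAGR."""
    return end_value / (1.0 + cagr) ** years

END_VALUE_BN = 18.2  # US$ billion in 2026, from the article
CAGR = 0.07          # ~7%, from the article
YEARS = 5            # assumed forecast span; the base year is not stated

start_bn = implied_start_value(END_VALUE_BN, CAGR, YEARS)
print(f"Implied starting market: ~US${start_bn:.1f} billion")
```

Changing `YEARS` shows how sensitive the implied starting point is to the unstated base year.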

MEMS are here to stay; the question remains “which industry will they revolutionize next?”
